How to Use Web Crawlers for SEO

Date: 2024-05-20 10:07:50. Source: compiled from the web. Editor: Ryan New.

A web crawler tool emulates search engine bots. Web crawlers are indispensable for search engine optimization. But leading crawlers are so comprehensive that their findings — lists of URLs and the various statuses and metrics of each — can be overwhelming.

For example, a crawler can show (for each page):

Crawlers can also group and segment pages based on any number of filters, such as a certain word in a URL or title tag.
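
As a rough illustration of that kind of segmentation, the sketch below filters a list of crawled pages by a word in the URL or title tag. The `Page` record, the `segment` helper, and the example.com URLs are all hypothetical; real crawlers expose this through their own filter interfaces.

```python
from dataclasses import dataclass


@dataclass
class Page:
    """Hypothetical record for one crawled page."""
    url: str
    title: str


def segment(pages, word):
    """Return the pages whose URL or title contains `word` (case-insensitive)."""
    needle = word.lower()
    return [
        page for page in pages
        if needle in page.url.lower() or needle in page.title.lower()
    ]


# Made-up crawl results for illustration only.
pages = [
    Page("https://example.com/blog/seo-tips", "SEO Tips"),
    Page("https://example.com/products/red-shoes", "Red Shoes"),
    Page("https://example.com/about", "About our blog"),
]

blog_pages = segment(pages, "blog")
for page in blog_pages:
    print(page.url)
```

The same filter applied to a product-line name or a URL folder would produce a segment that can be audited on its own.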

There are many quality SEO crawlers, each with a unique focus. My favorites are Screaming Frog and JetOctopus.

Screaming Frog is a desktop app. It offers a limited free version for sites with 500 or fewer pages. Otherwise, the cost is approximately $200 per year. JetOctopus is browser-based. It offers a free trial and costs $160 per month. I use JetOctopus for larger, more sophisticated sites and Screaming Frog’s free version for smaller sites.

Regardless, here are the top six SEO issues I look for when crawling a site.

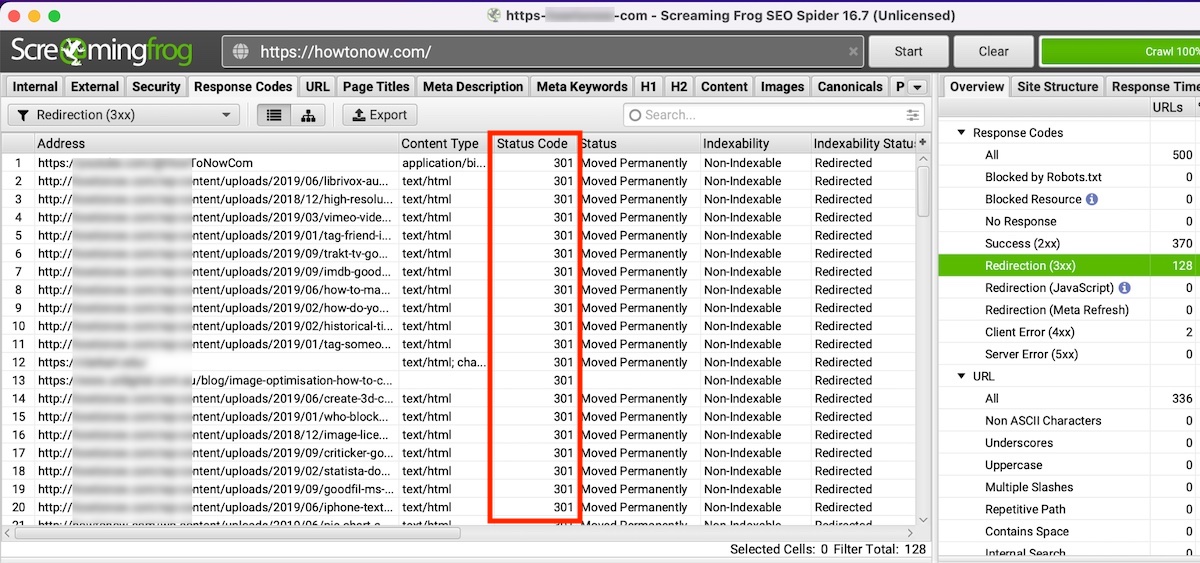

Error pages and redirects. The first and main reason for crawling a site is to find and fix all errors (broken links, missing elements) and redirects. Any crawler will give you quick access to those errors and redirects, allowing you to fix each of them.

Most people focus on fixing broken links and neglect redirects, but I recommend fixing both. Internal redirects slow down the servers and leak link equity.

Screaming Frog provides a list of all URLs returning a 301 (moved permanently) status code.
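
To make the fix list concrete, here is a minimal sketch that takes a crawl export and reports every internal link whose target redirects or errors, so the link can be pointed at the final URL. The data shapes (`links` as source/target pairs, `statuses` as a URL-to-status mapping) and the function names are assumptions for illustration, not the export format of any particular crawler.

```python
def classify_status(status):
    """Bucket an HTTP status code into 'redirect', 'error', or 'ok'."""
    if 300 <= status < 400:
        return "redirect"
    if 400 <= status < 600:
        return "error"
    return "ok"


def links_to_fix(links, statuses):
    """List internal links whose target returns a redirect or an error.

    links:    iterable of (source_url, target_url) pairs (assumed export shape)
    statuses: dict mapping each crawled URL to its HTTP status code
    """
    issues = []
    for source, target in links:
        if target not in statuses:
            continue  # target was not crawled, so nothing can be said about it
        kind = classify_status(statuses[target])
        if kind != "ok":
            issues.append((source, target, kind))
    return issues


# Made-up crawl data for illustration only.
statuses = {"/": 200, "/old": 301, "/about": 200, "/missing": 404}
links = [("/", "/old"), ("/", "/about"), ("/about", "/missing")]

for source, target, kind in links_to_fix(links, statuses):
    print(f"{source} links to {target} ({kind})")
```

Listing the source page alongside each problem target matters because the fix happens on the linking page, not on the redirecting URL.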

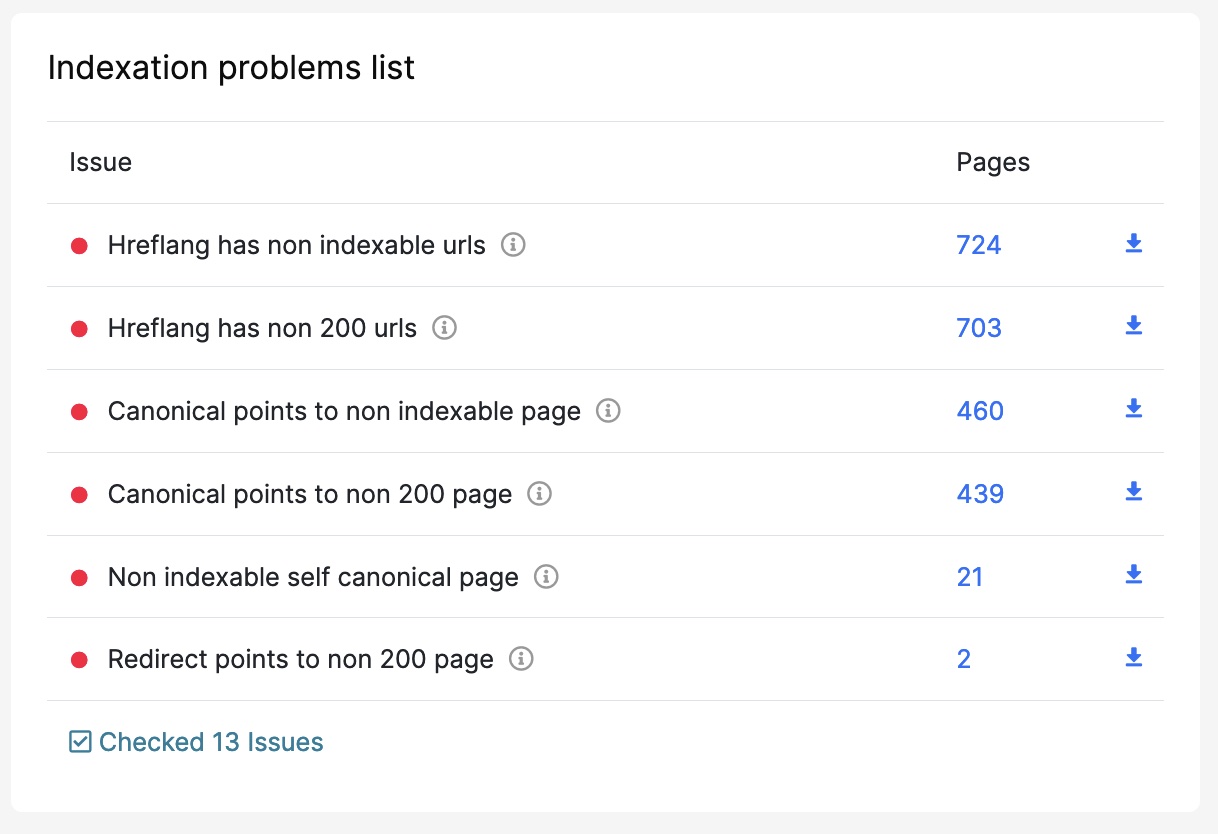

Pages that cannot be indexed or crawled. The next step is to check for accidental blocking of search crawlers. Screaming Frog has a single filter for that — pages that cannot be indexed for various reasons, including redirected URLs and pages blocked by the noindex meta tag. JetOctopus has a more in-depth breakdown.

JetOctopus provides a breakdown of pages that cannot be indexed or crawled.
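
Two of the most common blockers can be checked with Python's standard library alone. The sketch below looks for a robots meta tag containing `noindex` and tests a URL against robots.txt rules. The helper names and sample inputs are hypothetical, and the check is deliberately narrow: it ignores other signals such as the `X-Robots-Tag` HTTP header and canonical tags.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Records whether the page has <meta name="robots" content="...noindex...">."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        content = (attr.get("content") or "").lower()
        if name == "robots" and "noindex" in content:
            self.noindex = True


def has_noindex(html):
    """True if the HTML carries a robots meta tag with a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.noindex


def is_crawlable(robots_txt, url, user_agent="*"):
    """True if the given robots.txt text allows `user_agent` to fetch `url`."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return rules.can_fetch(user_agent, url)


# Made-up inputs for illustration only.
sample_html = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
sample_robots = "User-agent: *\nDisallow: /private/\n"

print(has_noindex(sample_html))
print(is_crawlable(sample_robots, "https://example.com/private/report"))
print(is_crawlable(sample_robots, "https://example.com/products"))
```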

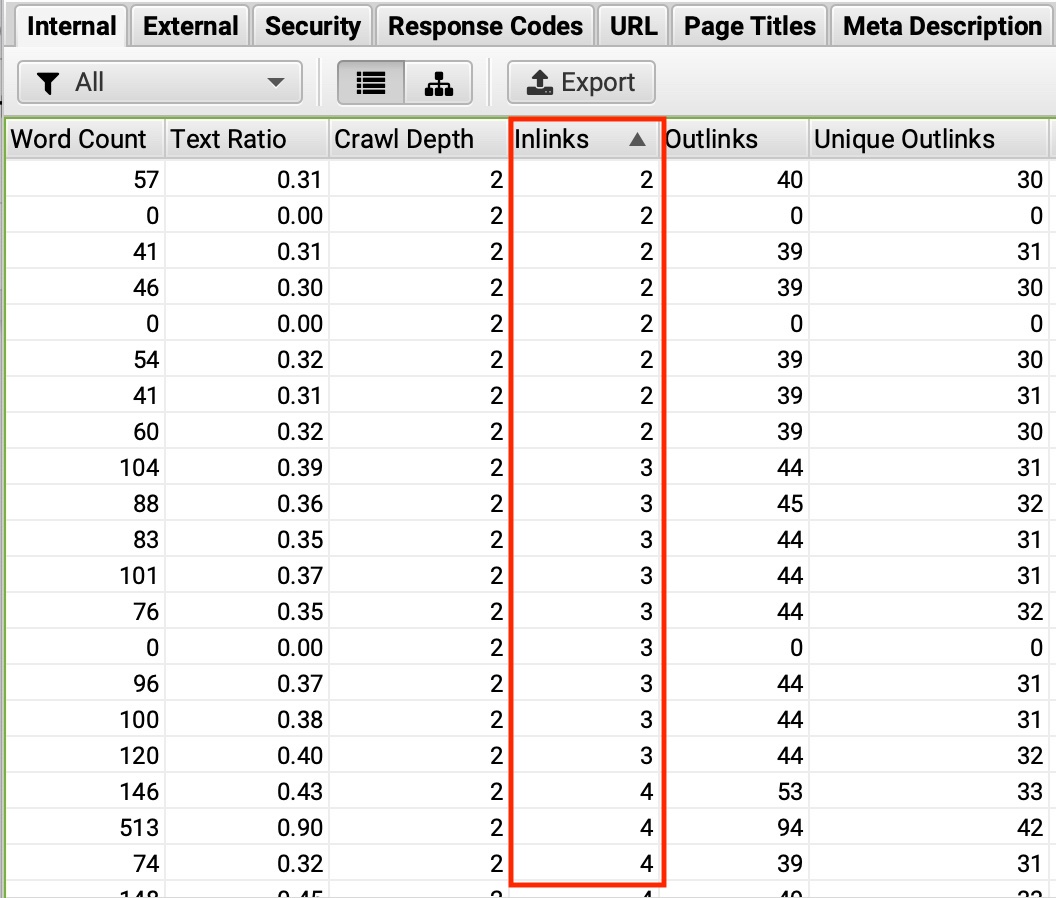

Orphan and near-orphan pages. Orphan and poorly interlinked pages are not an SEO problem unless they should rank. In that case, to increase the chances of high rankings, ensure those pages have many internal links. A web crawler can show orphan and near-orphan pages. Just sort the list of URLs by the number of internal backlinks (“Inlinks”).

Screaming Frog’s report lists URLs sorted by the fewest inbound links.
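
The inlink sort can be reproduced from any link export. This sketch counts distinct internal links pointing at each known page and orders the result from fewest to most, so orphans (zero inlinks) appear first. The `inlink_counts` helper and its input shapes are assumptions; note that a crawler only discovers true orphans if the page list comes from a source other than links, such as an XML sitemap.

```python
from collections import Counter


def inlink_counts(pages, links):
    """Return (url, inlinks) pairs sorted by fewest inlinks first.

    pages: iterable of known URLs (for example, from a sitemap)
    links: iterable of (source_url, target_url) internal links
    """
    counts = Counter({page: 0 for page in pages})
    for source, target in set(links):  # count each source/target pair once
        if source != target and target in counts:
            counts[target] += 1
    return sorted(counts.items(), key=lambda item: (item[1], item[0]))


# Made-up site structure for illustration only.
pages = ["/", "/a", "/b", "/orphan"]
links = [("/", "/a"), ("/", "/b"), ("/a", "/b"), ("/a", "/")]

for url, inlinks in inlink_counts(pages, links):
    print(f"{inlinks:>3}  {url}")
```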

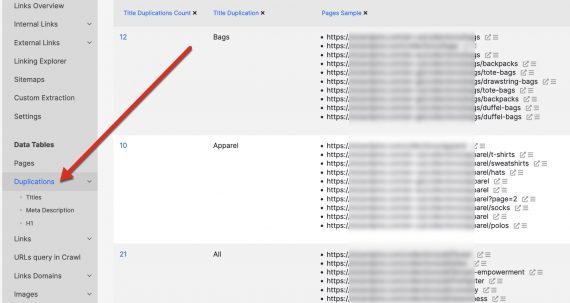

Duplicate content. Eliminating duplicate content prevents splitting link equity. Crawlers can identify pages with the same content as well as identical titles, meta descriptions, and H1 tags.

JetOctopus identifies pages with duplicate titles, meta descriptions, and H1 tags.
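
Duplicate detection on a single field comes down to grouping URLs by a normalized value. The sketch below does that for titles, and the same function works for meta descriptions or H1 text. The dictionary keys (`url`, `title`) and the `find_duplicates` helper are hypothetical, and exact matching after normalization is a simplification: commercial crawlers may also flag near-duplicates.

```python
from collections import defaultdict


def find_duplicates(pages, field):
    """Group URLs that share the same value for `field`.

    Values are lowercased and whitespace-collapsed before comparison.
    Returns only the groups that contain more than one URL.
    """
    groups = defaultdict(list)
    for page in pages:
        key = " ".join(page[field].lower().split())
        groups[key].append(page["url"])
    return {key: urls for key, urls in groups.items() if len(urls) > 1}


# Made-up crawl rows for illustration only.
pages = [
    {"url": "/shoes", "title": "Red Shoes | Example Store"},
    {"url": "/shoes?sort=price", "title": "Red  Shoes | Example Store"},
    {"url": "/hats", "title": "Hats | Example Store"},
]

for title, urls in find_duplicates(pages, "title").items():
    print(title, urls)
```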

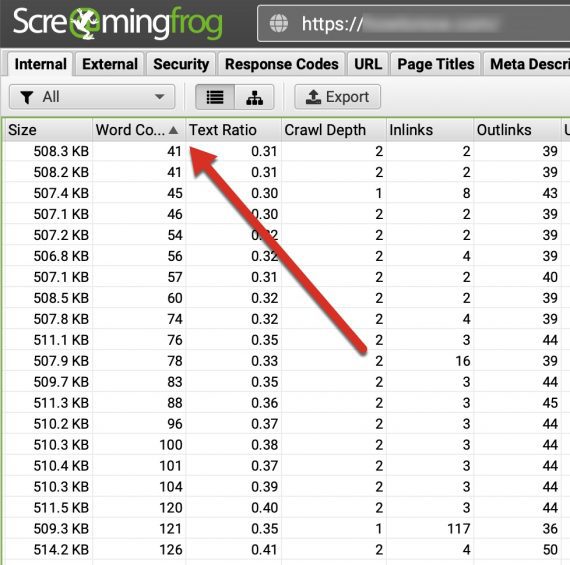

Thin content. Pages with little content do not hurt your rankings unless they are pervasive. Add meaningful text to thin pages you want to rank; otherwise, noindex them.

Screaming Frog lists the number of words on each page, indicating potential thin content.
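
A word count like the one in that report can be approximated by stripping markup and counting the remaining words. The sketch below skips `script` and `style` contents and flags pages under a threshold. The 300-word cutoff is an arbitrary assumption for illustration, not a figure from Screaming Frog or any search engine, and the count will not match a crawler's exactly because each tool decides differently what counts as body text.

```python
from html.parser import HTMLParser


class WordCounter(HTMLParser):
    """Counts visible words, ignoring the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.words = 0
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words += len(data.split())


def word_count(html):
    """Return the number of whitespace-separated words in the page text."""
    counter = WordCounter()
    counter.feed(html)
    return counter.words


def is_thin(html, min_words=300):
    """True if the page has fewer than `min_words` words (assumed threshold)."""
    return word_count(html) < min_words


# Made-up page for illustration only.
sample = (
    "<html><head><style>p {color: red}</style></head>"
    "<body><h1>Red Shoes</h1><p>Buy now.</p>"
    "<script>var x = 1;</script></body></html>"
)
print(word_count(sample), is_thin(sample))
```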

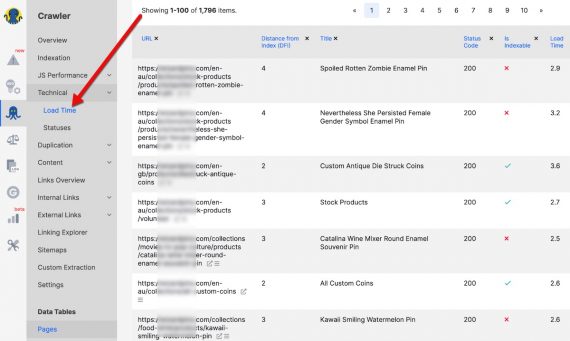

Slow pages. JetOctopus has a pre-built filter to sort (and export) slow pages. Screaming Frog and most other crawlers have similar capabilities.

JetOctopus’s filter sorts URLs by load time.
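
The same sort-and-export step is easy to apply to any crawl data that includes load times. This is a sketch under stated assumptions: the row keys (`url`, `load_ms`), the helper names, and the 2,000 ms threshold are illustrative choices, not JetOctopus settings.

```python
import csv
import io


def slow_pages(rows, threshold_ms=2000):
    """Return rows slower than `threshold_ms`, slowest first."""
    slow = [row for row in rows if row["load_ms"] > threshold_ms]
    return sorted(slow, key=lambda row: row["load_ms"], reverse=True)


def to_csv(rows):
    """Serialize rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=["url", "load_ms"], lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Made-up timings for illustration only.
rows = [
    {"url": "/a", "load_ms": 2500},
    {"url": "/b", "load_ms": 800},
    {"url": "/c", "load_ms": 4100},
]

print(to_csv(slow_pages(rows)))
```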

After addressing the six issues above, focus on:
